Early on Easter Sunday, the Large Hadron Collider’s second run got underway when proton beams began circulating in the 27-kilometre ring for the first time in two years. Over the coming weeks, the beams will be accelerated to speeds close to that of light, reaching the unprecedented energy of 13 teraelectronvolts (TeV). This is well above the 8 TeV of the previous run, during which the long-sought Higgs boson was discovered in 2012.

The new run will subject the Standard Model of particle physics to its toughest tests yet, and may help identify some of the fundamental forces of nature that the Standard Model does not include. With 13 TeV proton-proton collisions expected before summer, the LHC experiments will soon be exploring uncharted territory in particle physics.

Cambridge researchers at CERN are playing a major part in preparing the ATLAS detector – the largest of the LHC’s seven particle detectors – for action, with upgraded systems ready to go to work as soon as the beams start to collide. All the preparations are in place to begin analysing the data, and if all goes well, early results could be expected before the end of the year.

“The current Standard Model explains the known particles and forces, and the discovery of the Higgs completed that picture,” said Professor Andy Parker, Head of the Cavendish Laboratory at the University of Cambridge, and one of the founders of ATLAS. “But the Standard Model does not explain dark matter, which is believed to make up most of the Universe, nor Dark Energy, a mysterious force driving the galaxies ever further apart.”

The answers to these problems in cosmology might lie in the realm of sub-atomic physics studied at CERN. For example, the LHC might be able to produce dark matter particles, which would be glimpsed in the debris of collisions detected by the ATLAS and CMS experiments.

“Even more exciting is the possibility that the Universe could have more than three space dimensions, and that other spaces are hidden all around us,” said Parker. “This could also be revealed at CERN by the production and decay of microscopic quantum black holes, a particular interest of the Cambridge researchers at CERN. Detailed studies of the Higgs boson are also going to test our understanding of the Standard Model, with any unexpected effects leading us towards new physics. The upgrade of the LHC will allow scientists to search for new discoveries which have so far been out of reach.”

The upgrade was a Herculean task. Some 10,000 electrical interconnections between the LHC’s superconducting dipole magnets were consolidated, magnet protection systems were added, and the cryogenic, vacuum and electronics systems were improved and strengthened. In addition, the beams will be set up to produce more collisions by packing the proton bunches closer together, with the time separating bunches reduced from 50 nanoseconds to 25 nanoseconds.

After the discovery of the Higgs boson in 2012 by the ATLAS and CMS experiments, physicists will be putting the Standard Model of particle physics to its most stringent test yet, searching for new physics beyond this well-established theory describing particles and their interactions.

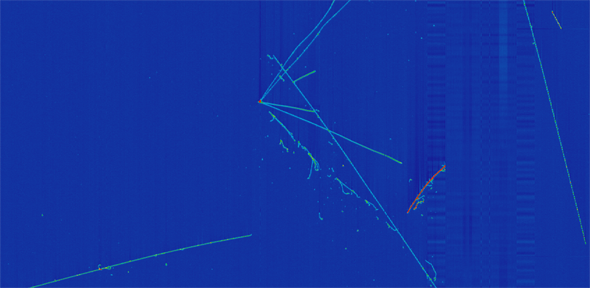

With superconducting magnets cooled to the extreme temperature of -271°C, the LHC is capable of simultaneously circulating particles in opposite directions, in tubes under ultrahigh vacuum, at a speed close to that of light. Gigantic particle detectors, located at four interaction points along the ring, record collisions generated when the beams collide.

In routine operation, protons make some 11,245 laps of the LHC per second, producing up to 1 billion collisions per second. The CERN computing centre stores over 30 petabytes of data from the LHC experiments every year, the equivalent of 1.2 million Blu-ray discs.

After two years of intense maintenance and consolidation, and several months of preparation for restart, the Large Hadron Collider, the most powerful particle accelerator in the world, is back in operation after a major upgrade.

The text in this work is licensed under a Creative Commons Attribution 4.0 International License. For image use please see separate credits above.